Description

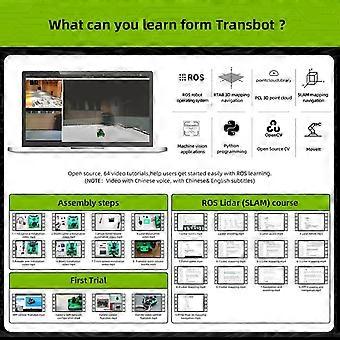

AI Robot Tank Kit with Dual Vision Lidar, Python Programmable, Tracked Autonomous Navigation

AI Vision Robot Tank Kit with Lidar and Python Programming

Overview

A modular tracked robot kit designed for learning, prototyping, and developing autonomous navigation and perception projects. Combines vision and lidar sensing with Python programmability to accelerate development of robotics applications.

Key benefits

Rapid learning and prototyping: Combines visual perception and lidar distance sensing so you can build and test real-world robotics functions without assembling separate sensors from scratch.

Practical autonomy: Enables obstacle detection and distance-based navigation to reduce manual control and improve field testing reliability.

Flexible development with Python: Program behaviors, process sensor data, and integrate algorithms using Python, a widely used language for education and research.

Extensible platform: Modular design supports adding sensors, controllers, and custom modules as project needs grow.

What it does

Visual perception using a camera module for tasks such as object recognition, tracking, and scene analysis.

Lidar-based distance sensing for reliable range measurement and obstacle detection in a variety of lighting conditions.

Tracked chassis for stable movement over uneven surfaces and controlled turning for precise path following.

Python programmability for writing custom control, data processing, and logging routines.

Compatibility and software

Designed to work with common single-board computers and microcontrollers when paired with appropriate connectors and drivers.

Supports Python libraries and example code to accelerate development of perception and navigation features.

Compatible with standard communication interfaces for integrating additional sensors and actuators.

Physical attributes and materials

Tracked mobile chassis optimized for traction and stability.

Durable structural materials suitable for repeated assembly and field use.

Compact footprint for indoor lab use and small outdoor testing.

Modular mounting points for sensors and extension modules.

Performance highlights

Combination of camera and lidar provides complementary sensing for robust obstacle awareness and situational understanding.

Tracked drive offers steady locomotion over varied indoor surfaces and light outdoor terrain.

Python control enables rapid iteration of algorithms and behavior tuning.

Typical use scenarios

Education and training: Hands-on robotics curriculum where students learn perception, sensor fusion, and programming by building and testing real projects using Python.

Prototype development: Rapidly prototype autonomous behaviors such as obstacle avoidance, waypoint following, and vision-based object tracking before migrating to larger platforms.

Research and experimentation: Test mapping, localization, and perception algorithms by combining lidar range data and camera imagery to evaluate sensor fusion approaches.
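As an illustration of the kind of camera and lidar fusion mentioned above, here is a minimal Python sketch that converts the pixel column of a detected object into a bearing and then looks up the lidar range nearest to that bearing. It is not the kit's API: the function names, the pinhole camera model, the field-of-view value, and the assumption that camera and lidar share the same origin and forward axis (0 degrees ahead, positive to the left) are all hypothetical choices made for the example.

```python
import math
from typing import Optional, Sequence


def pixel_to_bearing_deg(x_px: float, image_width_px: int,
                         horizontal_fov_deg: float) -> float:
    """Convert an image column to a bearing (hypothetical helper).

    Assumes a simple pinhole camera; positive bearings are to the left.
    """
    half_width = image_width_px / 2
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = half_width / math.tan(math.radians(horizontal_fov_deg / 2))
    offset_px = half_width - x_px  # positive when the object is left of center
    return math.degrees(math.atan2(offset_px, focal_px))


def range_at_bearing(bearing_deg: float,
                     angles_deg: Sequence[float],
                     ranges_m: Sequence[float],
                     max_gap_deg: float = 5.0) -> Optional[float]:
    """Return the valid lidar range closest in angle to the bearing.

    Returns None when no valid beam lies within max_gap_deg.
    """
    best = None  # (angular gap, range)
    for angle, rng in zip(angles_deg, ranges_m):
        gap = abs(angle - bearing_deg)
        # Non-positive ranges are treated as invalid readings (assumption).
        if rng > 0 and gap <= max_gap_deg and (best is None or gap < best[0]):
            best = (gap, rng)
    return None if best is None else best[1]


if __name__ == "__main__":
    # Example values only: a 640-pixel-wide image with a 60 degree field of view.
    bearing = pixel_to_bearing_deg(x_px=400, image_width_px=640,
                                   horizontal_fov_deg=60.0)
    distance = range_at_bearing(bearing, [-10.0, -5.0, 0.0, 5.0],
                                [2.0, 1.8, 1.5, 1.6])
    print(f"bearing={bearing:.1f} deg, distance={distance} m")
```

On real hardware the camera and lidar are usually offset from each other, so a calibration step would be needed before relying on this lookup.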

What you get from this platform

An integrated development platform that combines vision and lidar sensing with a mobile tracked base.

A programmable environment using Python that supports learning, testing, and deployment of custom robotics behaviors.

A modular kit that adapts to educational, hobbyist, and early-stage research needs without extensive hardware sourcing.

Who will benefit most

Students and educators building practical robotics lessons.

Hobbyists who want an expandable platform for learning perception and autonomous navigation.

Researchers and developers prototyping sensor fusion and control algorithms with a compact mobile base.

Specifications summary

Mobile tracked chassis for stable mobility.

Integrated vision and lidar sensing for complementary perception.

Python-based programming support for development.

Modular design for adding sensors and controllers.

Getting started

Begin with included example scripts to validate sensors and basic motion.

Use Python to write perception pipelines, control loops, and data logging.

Expand hardware or software components as project requirements evolve.
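To give a feel for what a first control-loop step might look like, the sketch below picks a drive command from a single lidar scan. It is a minimal illustration under stated assumptions, not the kit's actual example code: the function name, the command strings, the angle convention (0 degrees straight ahead, positive to the left), and the distance threshold are all hypothetical, and the scan is passed in as plain Python lists rather than read from a real driver.

```python
from typing import Sequence


def choose_action(angles_deg: Sequence[float],
                  ranges_m: Sequence[float],
                  stop_distance_m: float = 0.30,
                  front_half_width_deg: float = 30.0) -> str:
    """Pick a drive command from one lidar scan (hypothetical helper).

    Returns "forward", "turn_left", "turn_right", or "stop".
    """
    front, left, right = [], [], []
    for angle, rng in zip(angles_deg, ranges_m):
        if rng <= 0.0:
            # Treat non-positive readings as invalid and skip them (assumption).
            continue
        if abs(angle) <= front_half_width_deg:
            front.append(rng)
        elif 0 < angle <= 90:
            left.append(rng)
        elif -90 <= angle < 0:
            right.append(rng)

    # Without any valid reading ahead, the safe choice is to stop.
    if not front:
        return "stop"
    # The path ahead is clear when every front beam is beyond the threshold.
    if min(front) > stop_distance_m:
        return "forward"
    # Otherwise turn toward the side whose nearest obstacle is farther away.
    left_clear = min(left) if left else 0.0
    right_clear = min(right) if right else 0.0
    return "turn_left" if left_clear >= right_clear else "turn_right"


if __name__ == "__main__":
    # Example scan: obstacle 0.2 m ahead, more room on the left than the right.
    print(choose_action([-60.0, 0.0, 60.0], [0.5, 0.2, 1.0]))
```

In a real loop, the returned command would be translated into track speeds through whatever motor interface the kit's example scripts expose, and each scan and decision could be appended to a log file for later review.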

This kit provides a practical, programmable platform for developing and testing vision- and lidar-enabled robotics projects using Python in educational, prototyping, and research contexts.

Fruugo ID: 472203558-987727331

EAN: 6016494037655